I’m back at the RE•WORK DL summit again this year to catch up with some of the hottest research in Deep Learning (DL). I’ve shared a reading list at the end of this article that is related to the main track sessions I attended.

Of particular interest to me was a talk by Richard Turner on using adaptive networks to allow continuous training with new data instead of having to re-train from scratch.

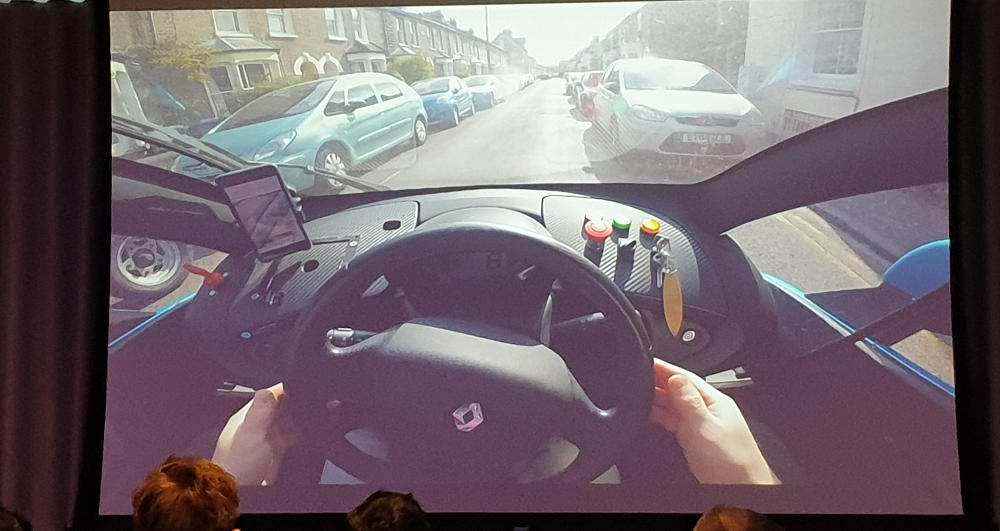

Reinforcement Learning featured heavily this time, as researchers coax DL algorithms to paint, control robots, drive, and play StarCraft. There was also a variety of talks related to autonomous driving, ranging from making sense of sensor data to controlling the car itself.

Data inefficiency was an issue that came up often in the talks. For example, AlphaStar AI agents are trained for over 200 gaming years to be able to compete with the best players. Another important issue that remains unsolved, especially for safety-critical systems, is how to ensure that the algorithms behave sensibly in all situations, even in the rare cases where there’s little or no available training data.

Prof. Neil Lawrence’s talk on Machine Learning Systems Design highlighted these problems, stating that we’re experiencing a ‘data crisis’. The term is a reference to Edsger Dijkstra’s ‘software crisis’, whereby software gets more complex (and more expensive) in tandem with compute power. Now that software is generated from data, more and more effort needs to be spent on improving and verifying the dataset. In addition to an increased focus on data, it is also important to ensure traceability of the training process and that deployed systems are constantly monitored to detect anomalous inputs and outputs.
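To make the monitoring point a little more concrete, here is a minimal sketch of my own (not something shown in the talk) of one simple way to flag anomalous inputs: compare each incoming value against the mean and standard deviation of the training data. The function name `make_input_monitor`, the threshold of 3 standard deviations, and the sample numbers are all assumptions for illustration; a real system would likely need something more robust.

```python
import statistics


def make_input_monitor(training_values, threshold=3.0):
    """Return a checker that flags values far from the training distribution.

    Hypothetical helper: a value is treated as anomalous when it lies more
    than `threshold` sample standard deviations from the training mean.
    """
    mean = statistics.fmean(training_values)
    stdev = statistics.stdev(training_values)

    def is_anomalous(value):
        return abs(value - mean) / stdev > threshold

    return is_anomalous


# Made-up training data for a single numeric input feature.
training_values = [10, 11, 9, 10, 12, 8, 10]
is_anomalous = make_input_monitor(training_values)

print(is_anomalous(10.5))  # close to the training data
print(is_anomalous(25))    # far outside the training data
```

The same idea could be applied to model outputs, so that unusual predictions are logged for review rather than silently acted upon.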

The conference ended with a panel talk on AI explainability. Now that transparency of decisions made by AI is required by the UK’s GDPR law, companies need to start actively working on explaining their algorithms. The panel’s consensus was that, yes, it’s an important issue and the industry is working on improving its practices. There was a suggestion to explore the use of simpler models first (e.g. regression, decision trees), where it is easier to explain the correlation between inputs and prediction. More details can be found in ICO’s interim report on AI explainability, which is definitely worth a read.
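As a rough illustration of why simpler models are easier to explain, the sketch below fits a one-input linear regression by ordinary least squares and then spells out how a prediction is composed. This is my own toy example rather than anything presented by the panel; the helper names `fit_line` and `explain`, and the data, are made up for illustration.

```python
def fit_line(xs, ys):
    """Ordinary least squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


def explain(slope, intercept, x):
    """Describe a prediction as a baseline plus the input's contribution."""
    prediction = slope * x + intercept
    return (
        f"prediction {prediction:.2f} = {intercept:.2f} (baseline) "
        f"+ {slope:.2f} * {x} (input contribution)"
    )


# Made-up data that follows y = 2x + 1 exactly.
xs = [1, 2, 3, 4]
ys = [3, 5, 7, 9]

slope, intercept = fit_line(xs, ys)
print(explain(slope, intercept, 5))
```

With a model like this, the explanation is just the fitted coefficients: every unit increase in the input changes the prediction by the slope. A deep network offers no such direct reading.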

That’s a wrap for this year’s conference report. I hope it’s been interesting and/or useful to you. If you’re thinking of applying DL to your research and are not sure where to start, visit our DL resources page and look out for our upcoming DL training sessions by signing up to our mailing list. We also run fortnightly code clinic sessions and may be able to help if you’re stuck on DL or more general research computing problems.

Below is a list of reading materials and resources that came up in the conference:
