I worked in commercial I.T. for a few years before doing a PhD and spending many years in scientific research. Coming back to software full time is a bit like coming out of cryo-stasis: technology has moved on in ways I could not possibly have imagined and I must adapt like some kind of sci-fi person. One of the big changes over the last few years has been the increased use of virtual machines (e.g. using VirtualBox) and containers (Docker is a popular choice; Singularity may confer advantages in High Performance Computing (HPC)). I now feel ready to talk about this with others.

If you’ve persevered to this point in the post, you deserve an explanation of what virtual machines and containers actually are. But you are going to have to wait until I’ve explained how software interacts with “normal” hardware such as a laptop.

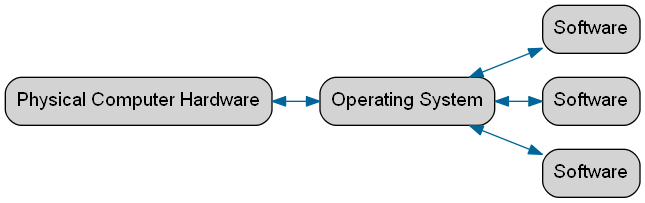

These are physical computers made of glass, plastic and metal. The physical hardware resources (processor, memory, storage, screen) that make up a computer are (generally) given instructions by the software it runs via an operating system such as Windows or Linux. Software that has been engineered for one operating system (generally) will not work on another.

When software is installed, typically a bunch of files are copied onto the computer and some other files are altered. Yet more prerequisite files might be downloaded from another source. A problem arises if, by installing one piece of software, a file on which another piece of software depends is altered or removed. This can be accidental or malicious. Malicious software can be deliberately designed to access data which should remain private to other software.

Another potential problem is a situation where the software runs, but behaves differently on different computers. This could involve the software crashing, or perhaps producing slightly different results — a big problem when trying to make results reproducible.
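One modest habit that helps here is to record where a result was produced alongside the result itself. The sketch below is only an illustration using Python's standard library; the function name `environment_fingerprint` and the choice of fields are my own assumptions, not any kind of standard.

```python
import platform
import sys


def environment_fingerprint():
    """Collect a few facts about the machine and interpreter running the code.

    Hypothetical helper for illustration: the fields chosen here are an
    assumption about what is worth recording, not a complete list.
    """
    return {
        "operating_system": platform.system(),       # e.g. "Linux" or "Windows"
        "os_release": platform.release(),
        "machine": platform.machine(),               # e.g. "x86_64"
        "python_version": platform.python_version(),
        "executable": sys.executable,                # which Python is actually running
    }


if __name__ == "__main__":
    # Print the fingerprint so it can be saved next to the results.
    for key, value in environment_fingerprint().items():
        print(f"{key}: {value}")
```

If two people get different answers from the same code, comparing these fingerprints is a reasonable first place to look.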

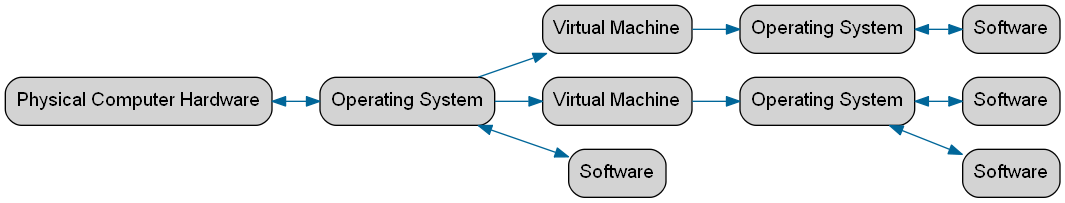

A virtual machine (or VM) is, in effect, a piece of software, running on a physical computer, that emulates a whole computer and hosts its own operating system. A computer in the imagination of another.

Software can then be run on the virtual machine. Much as multiple pieces of software can be installed on a single physical computer, so can multiple virtual machines. And multiple pieces of software can be installed on each virtual machine. All this comes at a performance cost: software running on a virtual machine will not generally run as fast as the same software running directly on physical hardware. So why bother with all this complexity?

So virtual machines can be really helpful, but they can be slow to run and also slow to configure.

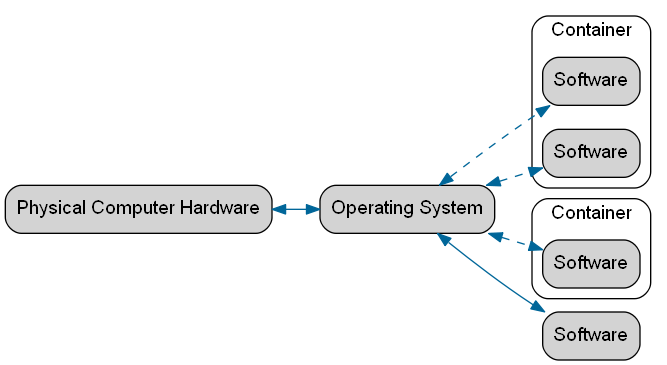

Containers get round some of the problems with virtual machines. The term “container” is not always defined in exactly the same way. Containers allow the software running in them to access hardware more directly than a virtual machine does, but restrict which hardware that software can use and how separate containers interact with each other. This confers many of the advantages of using virtual machines. However, the key technical difference between a container and a virtual machine is that a container does not (generally) have its own operating system kernel; it shares the kernel of the host. This means containers avoid some of the slowdown associated with virtual machines, but can still be configured and replicated to ensure that software runs consistently.
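To make the idea of restricted hardware access a little more concrete, here is a minimal sketch that assembles a `docker run` command with CPU, memory and network limits. The flags (`--rm`, `--cpus`, `--memory`, `--network none`) are real Docker options, but the helper `build_docker_command`, the image name and the default limits are made up for illustration. The function only builds the command; it does not run it.

```python
import shlex


def build_docker_command(image, command, cpus=1.0, memory="512m", isolated_network=True):
    """Build (but do not run) a `docker run` command with resource limits.

    Hypothetical helper: the default limits are illustrative assumptions.
    """
    args = [
        "docker", "run",
        "--rm",                # delete the container when it exits
        "--cpus", str(cpus),   # cap the CPU time available to the container
        "--memory", memory,    # cap the memory available to the container
    ]
    if isolated_network:
        # No network at all, so the container cannot talk to other containers.
        args += ["--network", "none"]
    args.append(image)
    args += list(command)
    return args


if __name__ == "__main__":
    # The image name here is just an example.
    cmd = build_docker_command("python:3.12-slim", ["python", "-c", "print('hello')"])
    print(shlex.join(cmd))
```

Pasting the printed command into a terminal on a machine with Docker installed should start a container that sees only the resources it was granted.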

The great successes of containers are in:

So far, so good. But probably not good enough to justify the current buzz around these technologies. I think one of the key benefits that we’ve not mentioned is how virtual machines and containers can be set up to work together.

Vagrant, for example, can be used to create and provision (install software, write configuration files, mount network drives, etc.) any number of virtual machines with the following:

    vagrant up

Easy, eh? Every aspect of the configuration is defined as software (this is “infrastructure as code”). I use this to configure linked pairs of database and web servers. I can use the same approach to configure virtual machines on my laptop for testing, for development servers and for live applications. Doing this with physical hardware would be epic, and the configurations would drift apart through updates and bug fixes in milliseconds (not really, but quickly).
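One payoff of defining configuration as software is that the definition can be compared against reality. The toy sketch below is not how Vagrant works internally; it simply models a desired configuration and two machines as Python dictionaries (every name and version number is invented) and reports where each machine has drifted from the definition.

```python
def find_drift(desired, actual):
    """Return {setting: (desired_value, actual_value)} for every mismatch."""
    drift = {}
    for setting, wanted in desired.items():
        found = actual.get(setting)  # None if the setting is missing entirely
        if found != wanted:
            drift[setting] = (wanted, found)
    return drift


# Hypothetical desired state for a web server; the values are made up.
DESIRED = {"os": "ubuntu-22.04", "web_server": "nginx-1.24", "open_ports": (80, 443)}

if __name__ == "__main__":
    machines = {
        "laptop-test-vm": {
            "os": "ubuntu-22.04", "web_server": "nginx-1.24", "open_ports": (80, 443),
        },
        "hand-built-server": {
            "os": "ubuntu-22.04", "web_server": "nginx-1.18", "open_ports": (80, 443, 8080),
        },
    }
    for name, actual in machines.items():
        print(name, find_drift(DESIRED, actual) or "matches the definition")
```

A machine rebuilt from the definition should always come back with no drift, which is much harder to promise for a server that has been patched by hand.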

Kubernetes takes this idea further still. It allows containers to be deployed in groups (which Kubernetes calls pods), with control over the level of interaction within and between them. This is great for scaling up to huge and variable numbers of software users, because hardware resources can be dynamically re-allocated to different applications.
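As a rough picture of what dynamic re-allocation means, the toy below shares a fixed number of container slots between applications in proportion to their current demand. This is emphatically not Kubernetes's scheduling algorithm; the function `allocate_replicas`, the application names and the demand figures are all invented for illustration.

```python
def allocate_replicas(demand, capacity):
    """Share `capacity` container slots between applications in proportion to demand.

    `demand` maps application name to a non-negative integer load.
    Uses the largest-remainder method so the shares always add up to `capacity`.
    """
    total = sum(demand.values())
    if total == 0:
        return {app: 0 for app in demand}

    shares, remainders = {}, {}
    for app, load in demand.items():
        # Whole slots earned, plus the leftover fraction (kept as an integer remainder).
        shares[app], remainders[app] = divmod(capacity * load, total)

    # Hand any unassigned slots to the applications with the largest remainders.
    leftover = capacity - sum(shares.values())
    for app in sorted(demand, key=remainders.get, reverse=True)[:leftover]:
        shares[app] += 1
    return shares


if __name__ == "__main__":
    # Daytime: the web application is busy, so it gets most of the slots.
    print(allocate_replicas({"web": 30, "api": 10, "batch": 0}, capacity=8))
    # Overnight: demand shifts, and the same hardware is shared out differently.
    print(allocate_replicas({"web": 10, "api": 10, "batch": 20}, capacity=8))
```

The hardware never changes between the two calls; only the way it is divided up does.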

Most of the technologies I’ve talked about are available to download and experiment with. If you think your software might benefit from running on a virtual machine or in a container and want some advice, consider an RSE code clinic.
